Science

I am interested in understanding the principles of intelligence in both artificial and biological agents, or agentic systems. I have worked on Bayesian and reinforcement learning models of decision-making and planning in agents. More recently, I have investigated the mechanisms underlying generalisable and compositional reasoning, both in terms of the representations that allow agents to generalise to novel situations and in terms of the online and offline mechanisms that underlie compositional inference.

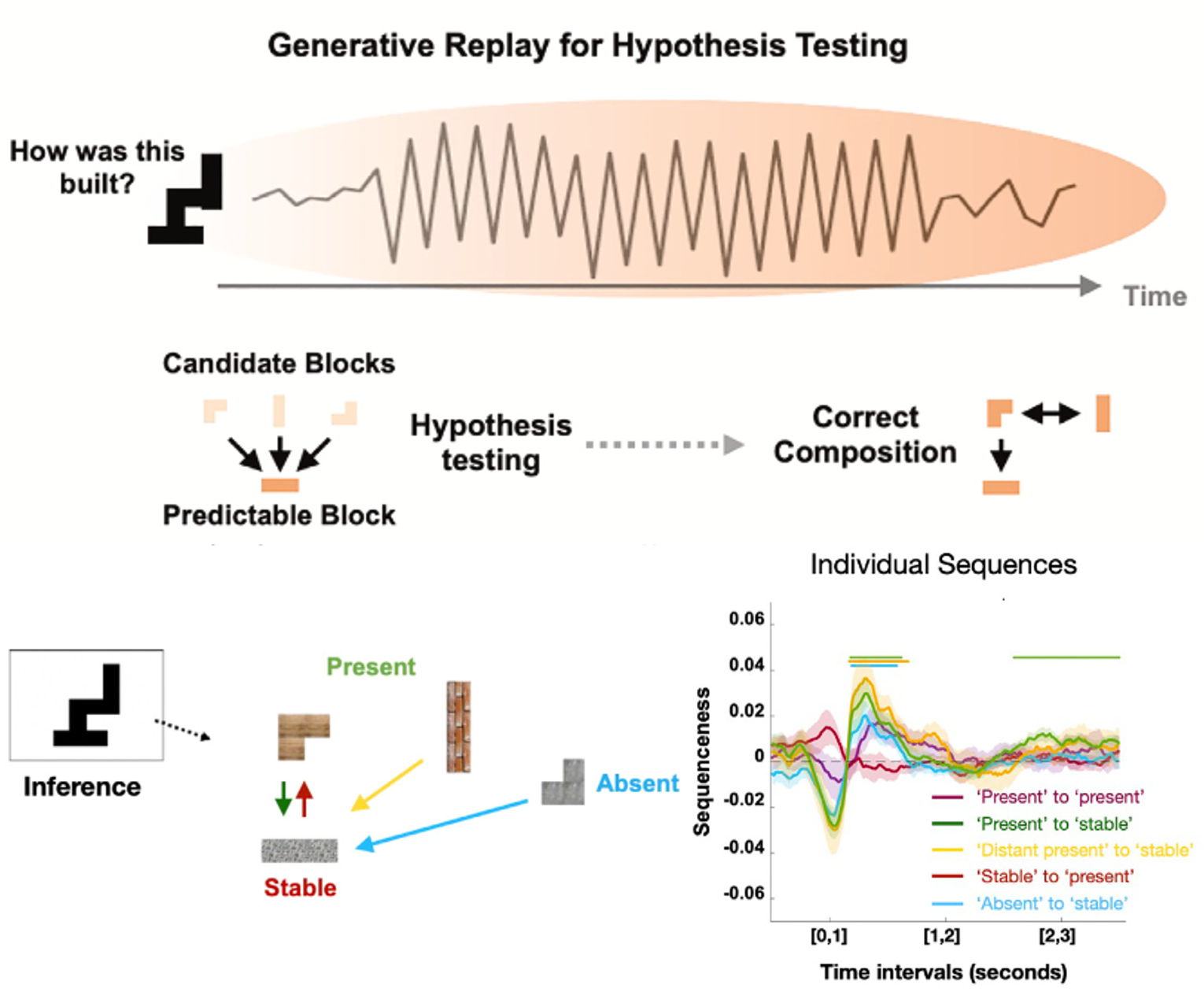

Online compositional replay

Online neural replay facilitates compositional inference by using learnt statistical structure to guide uncertainty resolution. When faced with a novel problem, neural replay reflects a hypothesis-testing mechanism that gradually resolves uncertainty, moving from more to less predictable building blocks (Schwartenbeck et al., Cell 2023). Similar mechanisms might be at play during language generation (Kurth-Nelson, ..., Schwartenbeck, Neuron 2023).

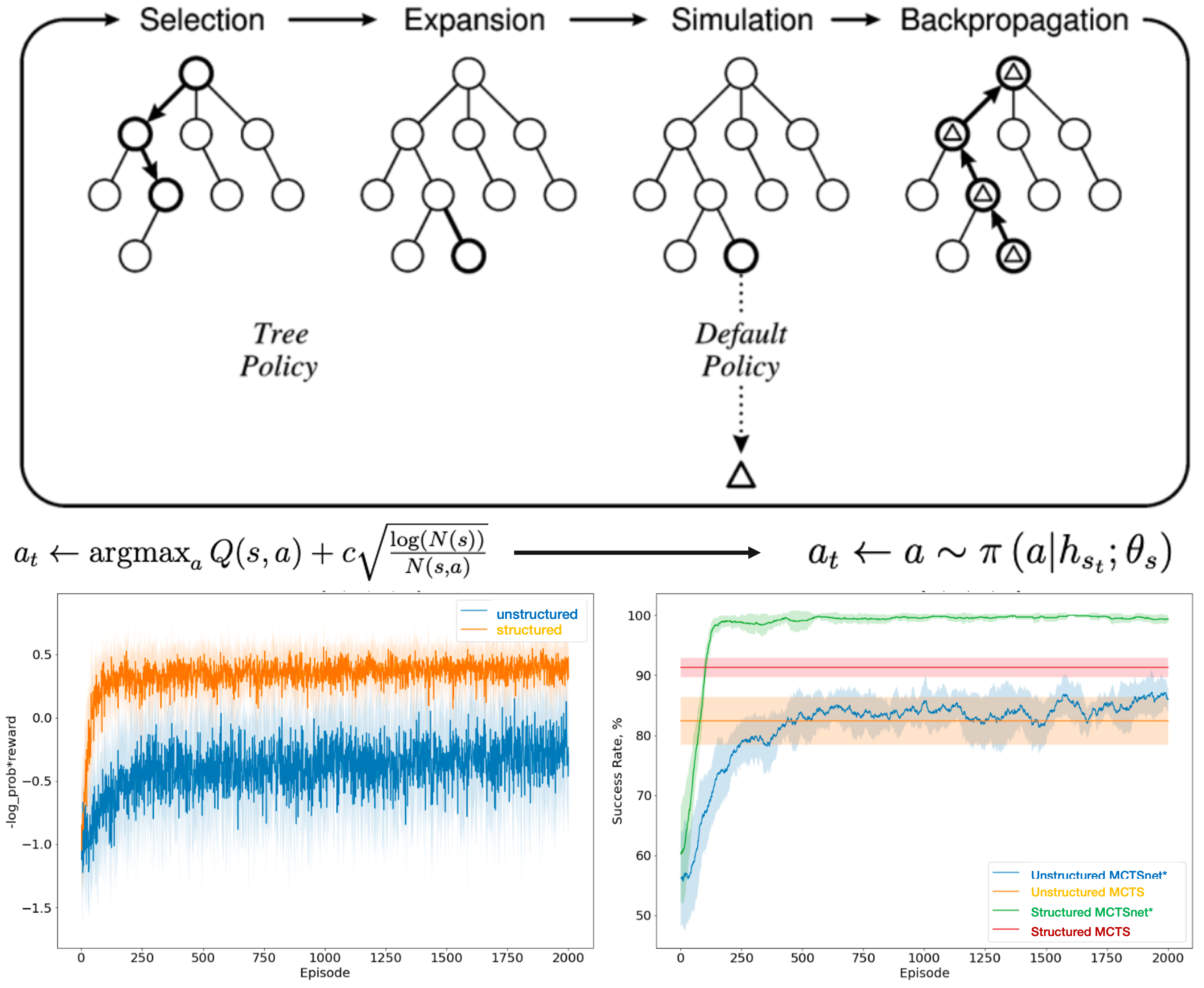

Adaptive MCTS

Applying ideas for making Monte Carlo tree search (MCTS) adaptive via trainable neural networks (Guez, ..., Silver, arXiv 2018), we find that a learnable policy network can pick up on this statistical structure to improve the performance of compositional inference (work led by Turan Orujlu, Schwartenbeck et al., RLDM 2022).

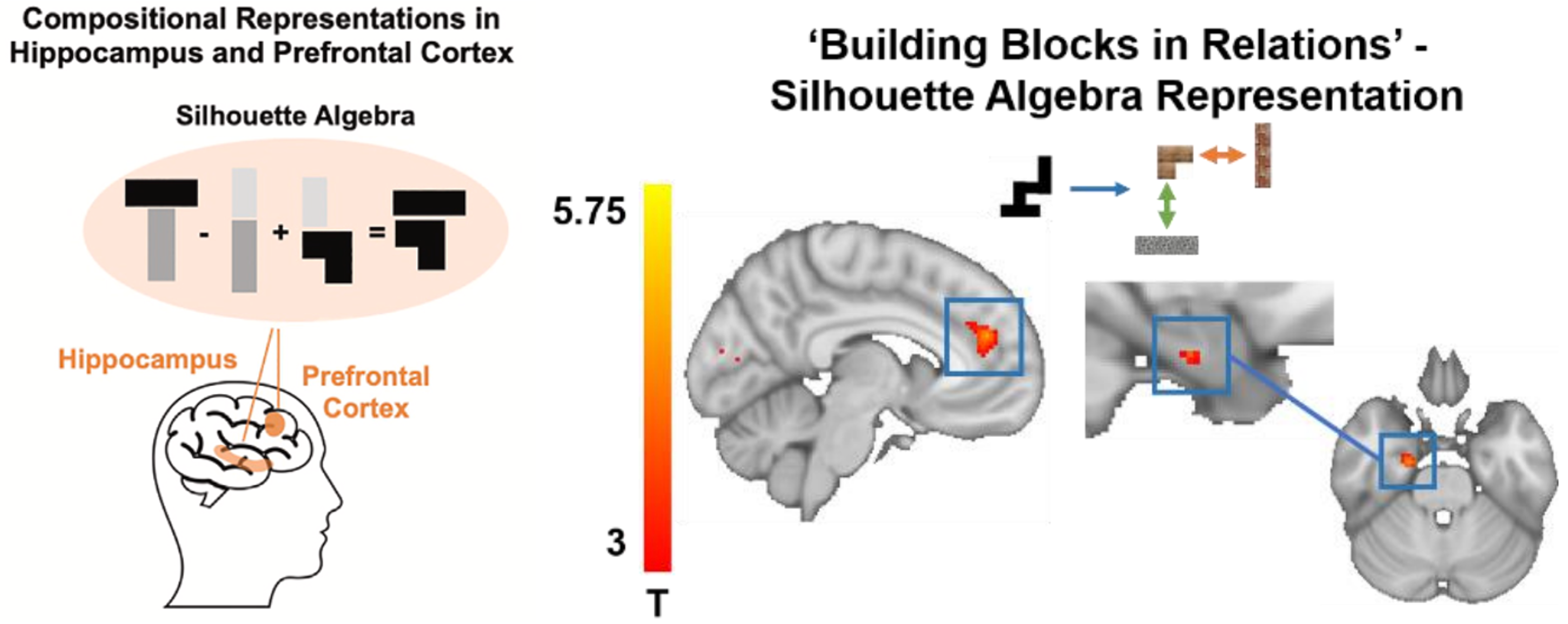

Silhouette Algebra

During compositional inference, we can use the individual neural representations of certain building blocks in specific relations to predict the neural representations of novel composites (Schwartenbeck et al., Cell 2023). This suggests a factorial neural code that underlies generalisable and compositional reasoning when faced with novel tasks, similar to a scene algebra in generative models of vision (Eslami et al., Science 2018).

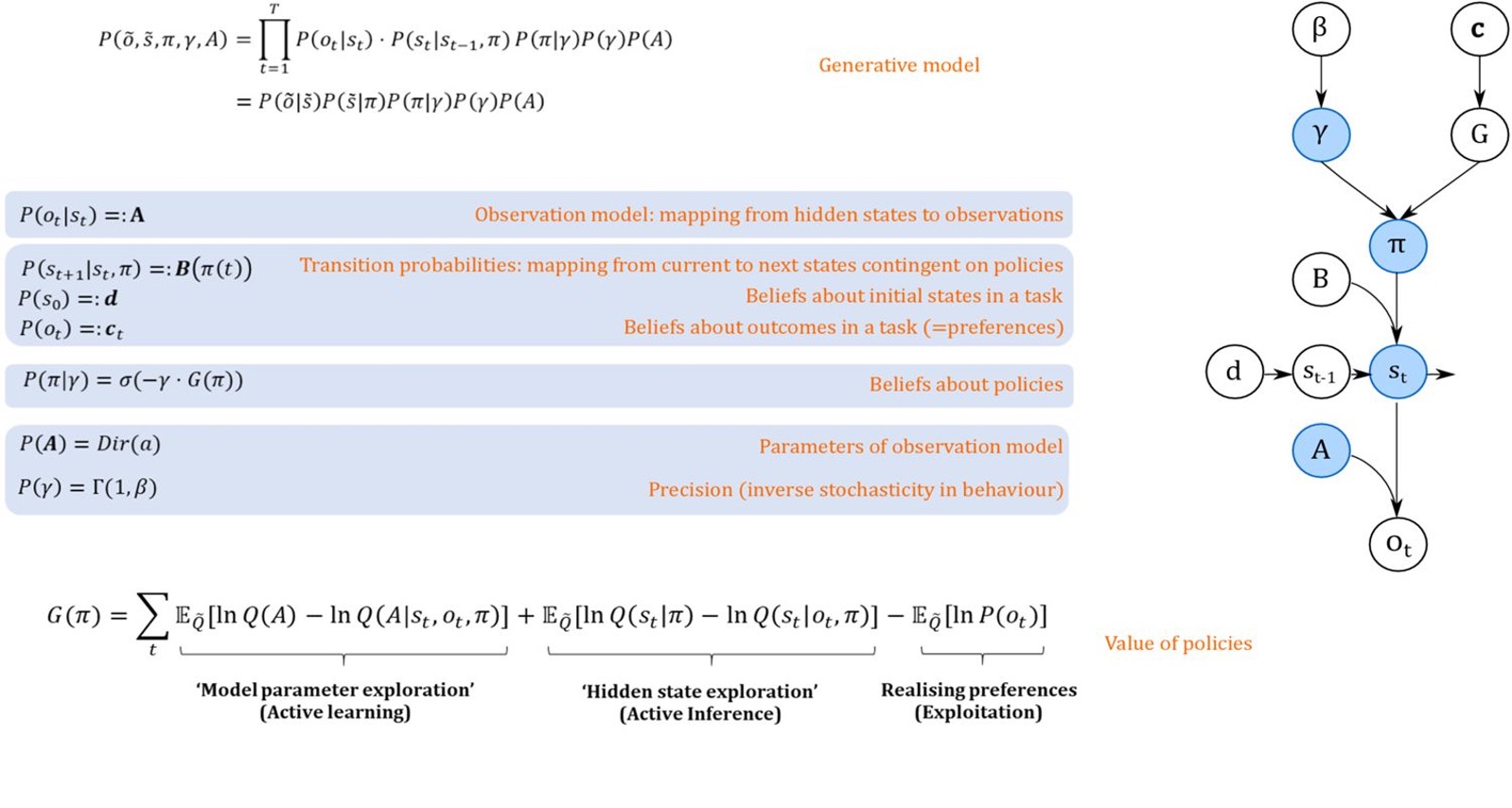

Information-theoretic planning

When casting decision-making as surprise minimisation in Markov Decision Processes, we can derive distinct biases for maximising reward, minimising uncertainty about hidden states, and minimising uncertainty about model parameters, all of which guide planning behaviour (Schwartenbeck et al., eLife 2019).
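
As a rough illustration of how such terms can be computed, the sketch below scores a single action in a small discrete model by two of the three quantities named above: the expected log preference over outcomes (a reward-like term) and the expected information gain about hidden states. The function name, argument layout, and toy numbers are assumptions made for this example and are not taken from the paper; the third term, information gain about model parameters, is left out for brevity.

```python
import math

def expected_value_terms(prior, likelihood, log_pref):
    """Hypothetical helper: score one action in a discrete model.

    prior[s]         : belief over hidden states s after taking the action
    likelihood[s][o] : P(o | s), probability of outcome o in state s
    log_pref[o]      : log preference (reward-like value) of outcome o

    Returns (expected log preference, expected information gain about states).
    """
    n_states = len(prior)
    n_obs = len(log_pref)

    # Predicted outcome distribution: P(o) = sum_s P(s) P(o | s)
    p_obs = [
        sum(prior[s] * likelihood[s][o] for s in range(n_states))
        for o in range(n_obs)
    ]

    # Reward-like term: expected log preference under predicted outcomes
    reward_term = sum(p_obs[o] * log_pref[o] for o in range(n_obs))

    # State information gain: mutual information between states and outcomes,
    # i.e. the expected KL divergence from prior to posterior beliefs
    info_gain = 0.0
    for s in range(n_states):
        for o in range(n_obs):
            joint = prior[s] * likelihood[s][o]
            if joint > 0:
                info_gain += joint * math.log(joint / (prior[s] * p_obs[o]))

    return reward_term, info_gain


# Toy comparison (made-up numbers): an informative and an uninformative action
prior = [0.5, 0.5]
log_pref = [0.0, -2.0]
look = expected_value_terms(prior, [[0.9, 0.1], [0.1, 0.9]], log_pref)
stay = expected_value_terms(prior, [[0.5, 0.5], [0.5, 0.5]], log_pref)
print("look:", look)
print("stay:", stay)
```

In this toy case both actions have the same expected log preference, so only the information-gain term separates them: it is zero for the uninformative action and positive for the informative one.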

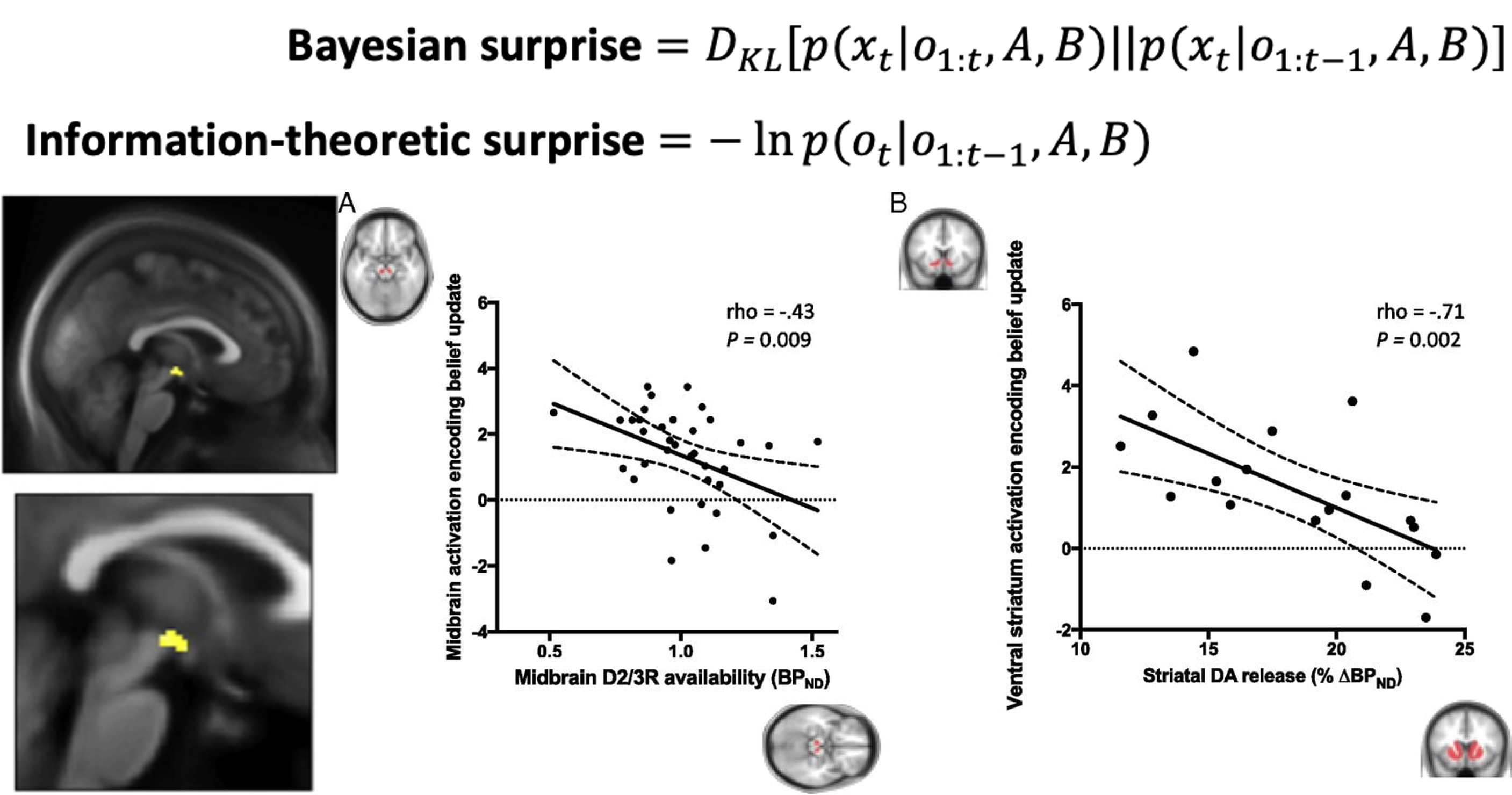

Neural basis of belief updating

When performing inference in Markov Decision Processes, there is an important distinction between surprising but meaningless events ("information-theoretic surprise") and unexpected events that allow agents to shift their beliefs about hidden states ("Bayesian surprise"). We find a clear neural distinction between these two surprise signals: unsigned Bayesian surprise, but not information-theoretic surprise, is reflected in dopaminergic activity, which is commonly associated with signalling signed reward prediction errors (Schwartenbeck et al., NeuroImage 2016; Nour, ..., Schwartenbeck et al., PNAS 2018).
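
The difference between the two quantities can be made concrete with a small numerical sketch. It assumes the standard definitions: information-theoretic surprise as the negative log probability of an observation, and Bayesian surprise as the KL divergence from prior to posterior beliefs over hidden states. The function names and the toy model are assumptions made for this example, not the analysis used in the cited studies.

```python
import math

def posterior(prior, likelihood, obs):
    """Bayes' rule over hidden states for a single observation index."""
    joint = [p * lik[obs] for p, lik in zip(prior, likelihood)]
    evidence = sum(joint)
    return [j / evidence for j in joint]

def information_surprise(prior, likelihood, obs):
    """Negative log marginal probability of the observation."""
    p_obs = sum(p * lik[obs] for p, lik in zip(prior, likelihood))
    return -math.log(p_obs)

def bayesian_surprise(prior, likelihood, obs):
    """KL divergence from prior to posterior beliefs about hidden states."""
    post = posterior(prior, likelihood, obs)
    return sum(q * math.log(q / p) for q, p in zip(post, prior) if q > 0)


# Toy model (made-up numbers): two hidden states, three possible observations.
# Observation 2 is rare but equally likely under both states, so it is
# surprising yet carries no information about the hidden state.
prior = [0.5, 0.5]
likelihood = [
    [0.85, 0.10, 0.05],  # P(o | state 0)
    [0.10, 0.85, 0.05],  # P(o | state 1)
]

for obs in (0, 2):
    print(
        obs,
        information_surprise(prior, likelihood, obs),
        bayesian_surprise(prior, likelihood, obs),
    )
```

Here the rare observation has the higher information-theoretic surprise but leaves beliefs unchanged, so its Bayesian surprise is zero, whereas the more common but diagnostic observation shifts beliefs and therefore has positive Bayesian surprise.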